Celo Discord Validator Digest #12

Restarting validator node and downtime penalty, proposal to add Zcash to the Celo Reserve, release process for core smart contracts, long block time, attestation service issue and useful info.

One of the troubles we have faced as a validator for Celo is keeping up with all the information that comes up in the Celo's Discord discussions. This is especially true for smaller validators whose portfolios include several networks. To help everyone stay in touch with what is going on the Celo validator scene and contribute to the validator and broader Celo community, we have decided to publish the Celo Discord Validator Digest. Here are the notes for the period of 6-19 July 2020.

Discussions

Restarting validator node and downtime penalty

BisonD noted that it was impossible to have your validator re-connected to a proxy node in under 60 seconds after a restart:

... I have spent some time experimenting with techniques for getting a Validator to restart in under 60 seconds (to avoid score dinging) and at the moment cannot get the Validator to reconnect to the Proxy in this timeframe. It takes about 65 seconds after a new Validator instance comes up before it properly connects to the Proxy and starts signing again.

...

In my test setup I have the proxy and validator each in their own container (basically). I kill the process in the validator container and wait for it to get restarted, which it does really quickly however, the proxy continues to complain that there is no validator, and the validator is there .. just sort of stalling until it finally connects and starts signing.

~65 seconds total, which is too long to avoid score penalty.

@Peter [ChainLayer.io]: afaik the certificate exchange takes place only once a minute, that might explain this as well.

@alchemydc | zanshindojo: IMO uptime/score should be calibrated to have impact in relation to the risk it poses to network consensus. Not sure it's calibrated properly with present thresholds. The score impact at 12 missed blocks seems like overkill.

@zviad | WOTrust | celovote.com: Overall the big issue right now is that when you restart your validator it can have negative affect on network.

So I think 12 block penalty does make sense, and we should wait for true HA solutions instead.

While consensus only requires 2/3 of validators to be up, block proposer needs to be up to propose a block, otherwise block will get delayed by extra 5 seconds.

So if everyone is taking small downtime frequently, it will lead to more instances when blocks are no longer resolved in 5 seconds and instead take 10 seconds.

If CELO truly becomes higher transaction per second network, those delays can become problematic (as an example, delayed blocks can already cause unexpected issues if you are doing arbitrage on an exchange to stabilize cUSD).

@BisonD: The issue for me is this: the protocol currently does not seem to have a mechanism that allows a validator to restart without penalty.

@zviad | WOTrust | celovote.com: Yeah, I agree on that point. my opinion is that instead of reducing the penalty we should just wait for true HA setup, that will allow you to restart validators without missing even a single block in steady state.

Until then, instead of changing the penalty (which isn't really changeable anyways easily), we just have to workaround it either by doing key rotation, or taking small uptime hits.

: Hi All.. I see you're talking about validator failover/redeploys. I've built a model to help me understand the cost of downtime, since it affect the scores and with that the rewards. And scores don't increase really fast... so it will affect rewards on subsequent epochs. Here's the link https://docs.google.com/spreadsheets/d/1ZNWrELtQvfxIA103D_Lu_Xb6XGk4kK0j6SWE_5ihWj4/edit#gid=0

On strategies:

For planned downtime, i think the best option for now is key rotation. Have 2 validators with 2 different keys. Switch on epoch change. This option won't suffer any downtime, but not ideal for unplanned downtimes.

Another option, that I believe it's simple, but haven't really tested is to use the

stopMining()andstartMining()rpc calls. If you have validator A (active) and B (the new one). You launch B withmining=false, whenBis synced and ready to start validator, you callstopMiningonA, wait for a new block and callstartMiningonB. This should work, my only concern is theannounceprotocol, that i need to check.Finally, it's true that on #protocol-security-eng we are working on schemes to improve this; and have automatic failover. But we are currently on the design phase. If you're interested in this, you can check https://docs.google.com/document/d/1VO0DHkcNHfxOHO0pFh9cYg8zEKp_VXxaYEXWxvJ9Db8/edit?usp=sharing and https://docs.google.com/document/d/1_Hxg_xB-VF4v_-P8Woi_uqBQr7P40WpxJXj8ZMXovf0/edit?usp=sharing

@chris-chainflow: Coming back to the conversation @BisonD started about uptime, my general thought is that the 1 min downtime hit feels overly-restrictive. I feel that an uptime requirement should be reasonably calibrated to the impact downtime has on the network as a whole, i. e. liveness and/or security impact.

Being subject to the strict requirement, while the network only supports a single proxy configuration feels quite inconsistent. While we hear that the multi-proxy option is "coming soon", real world circumstances, like this upgrade, put a heavy burden on the validators to compensate for the protocol shortcoming. Also, as acknowledged above, the key rotation feels like an expensive solution to avoiding the uptime hit.

My suggestion would be to relax the 1 minute downtime hit parameter, at least until multi-proxy support is available. Over the long term, I feel uptime should be measured over a longer window, which can then be calibrated to the impact on the actual impact on the network.

As a related point, I believe, at least to date, validators are generally a responsible set of actors, who value their reputation. This in and of itself is motivating enough to help ensure we maintain the highest levels of uptime we're able to maintain, given the resources available to us.

Proposal to add Zcash to the Celo Reserve

alchemydc | zanshindojo put forward a proposal to add Zcash to the Celo reserve:

Greetings fellow community members. Congratulations to all on mainnet liftoff, the activation of the stability protocol, and the engaged and vibrant community. I'm reaching out to get feedback on this proposal to add Zcash (ZEC) to the Celo Reserve. I believe that this will promote engagement between the Zcash and Celo communities, which are ideologically very much aligned. Down the track I hope to encourage development of automated, trustless bridges between the two protocols, which would enable automated, transparent management of the Zcash component of the reserve. I also believe diversifying the Celo Reserve with ZEC will help to mitigate the extent to which the reserve is presently exposed to Ethereum risk. This is my first proposal, so appreciate pointers for navigating the process, as well as feedback on how to improve the proposal. Thanks!

https://github.com/alchemydc/celo-proposals/blob/master/CGPs/0008.md

@Patrick | Validator.Capital: I support this idea because the Celo network needs 'shielded transactions' in order to reach full potential. Many businesses and individuals are going to be hesitant to adopt a payment system that leaks sensitive financial data. Diversifying the reserve and working more closely with the ZEC community is a step in the right direction.

@Thylacine | PretoriaResearchLab: To what extent is ZEC un- or negatively correlated with ETH to mitigate the Reserve's current exposure to ETH? I support privacy centric coins and it's a shame they don't have a higher mindshare in the community (I had a couple of tiny contributions in the Mastering Monero book), but there may be branding concerns with associating too strongly with privacy coins at this early stage. I wish it wasn't the case but yeah...

@alchemydc | zanshindojo: Appreciate the feedback. You are spot on that ZEC and ETH are highly correlated from a price perspective. The risk mitigation I'm speaking about has more to do with ZEC being a Bitcoin fork rather than an Ethereum fork, and thus reduces risk presented by upstream bugs, and also the reduced vulnerability surface that comes with omitting a Turing complete VM.

With respect to your concerns that adding a "privacy coin" to the Reserve would create bad optics for the Celo project, I suggest a fairly significant reframing. I believe that Zcash is no more a privacy coin than Netscape was a "privacy browser" when it introduced SSL to the web in the 1990's. This encryption layer was necessary in order to make the web usable for e-commerce and everything after. As nations start putting together plans for Central Bank Digital Currencies, it's clear that fully transparent public blockchains are nonstarters. This sentiment has been expressed recently by both Jerome Powell (chair of the Fed) as well as Christopher Giancarlo (former CFTC, now working on the Digital Dollar Project [https://www.digitaldollarproject.org/])

The Zcash project has worked with regulators, law enforcement and policy makers since the beginning, in order to build trust and understanding. Regulators fear and ban what they don't understand, so it was important to get out in front of this and educate, to win their hearts and minds.

Zcash was explicitly approved by NYDFS prior to being added to Gemini, and is also traded on Coinbase, Binance (including Binance USA) and Kraken.

This report by the Rand Corp went deep, looking at the use of cryptocurrencies on dark markets, and concludes that Zcash is not inordinately popular on dark markets: https://www.rand.org/pubs/research_reports/RR4418.html

@Thylacine | PretoriaResearchLab: Thanks these are great points regarding the so-called "privacy" (don't know a better word at this point), and good links. To be clear, it's not that I personally think it could be bad optics, but the "general"/uniformed reader might. The point around technical/protocol diversification makes good sense though. But why ZEC over, say LTC, XMR, or (I have no horse in the one true Bitcoin wars) BCH or something else with a fairly stable codebase and history? Would this addition to the reserve imply the community is long on the actual protocol features itself of ZEC? I've no problem if that's what is being proposed, just raising the conversation.

@alchemydc | zanshindojo: Spot on. I agree that there still exists stigma around so called "privacy coins", and that it will take time for people to appreciate the privacy+confidentiality != "anonymity".

Why ZEC instead of LTC? Well, looking at the stated goals from the Reserve FAQ, "any new reserve assets under consideration should be freely traded and settled 24/7 on liquid markets, and should be based on an open-source protocol", so LTC meets those requirements. That said, I find that LTC underwhelming, lacking meaningful differentiation versus other projects.

Why ZEC instead of XMR? Good question. I like XMR and believe it to be sufficiently liquid, but I think it may lack sufficient (regulated) exchange support to give the Reserve administrators adequate confidence. I'll let @Markus | cLabs and others that have more expertise than I in this domain speak to that.

Why ZEC instead of BCH or BSV or $fillintheblank? I think that the strong ideological alignment between the Zcash project and the Celo project is the primary answer to that question. I don't have much interest in the Bitcoin forks you mentioned, not because of any religious polarization, but simply because I think they lack meaningful differentiation and a mission orientation.

As to whether the community is long on the protocol features of Zcash, I'll let the community answer that question. I will say that I am pleased to see Plumo leverage zkSNARKs, and also to see that @Tromer, who is one of the founding cryptographers of the Zcash project, helped to design Plumo.

Release process for core smart contracts

asa | cLabs published a proposal about the release process for the core smart contracts:

ICYMI the release process for the core smart contracts is published here: https://docs.celo.org/community/release-process/smart-contracts

The proposal is to always release all smart contract changes atomically. This will make keeping track of what contracts are deployed on a given network much simpler, as we can always ensure that the deployed contracts correspond to a specific commit hash. This also ensure that the contracts remain interoperable, as we don't need to worry about keeping contracts interoperable with each other across different commits.

The implication here is that governance proposals to upgrade core smart contracts will be "all or nothing", in the sense that each time there will be a single proposal to upgrade the smart contracts from commit hash X to commit hash Y, which will contain updates to all smart contracts that had code changes between X and Y. This means that if there are controversial changes, it will be easiest (though not strictly necessary) to commit to or reject those changes before they make it into master, rather than by voting down the governance proposal. Certainly rejecting those changes is still possible by rejecting the proposal, but it will mean those specific changes that were rejected would need to then be reverted on master, and a new governance proposal be made.

Some of us at cLabs are currently working on smart contract upgradability tooling in order to make sure deploying upgrades is safe and easy, so that Celo can maintain the development velocity needed in order to respond to user feedback and needs. We're hoping to have this ready such that we can begin executing the first upgrade process within the next few weeks. Some changes that are in master already that would be batched into the first upgrade include:

Election.sol: A minor bug fix in which a value guaranteed to be zero was being subtracted unnecessarily

Governance.sol: Return

weightingetVoteRecordLockedGold.sol: Add additional

requirestatements to confirm consistency of balancesExchange.sol: Short circuit to return zero when buy/sell amount is zero

DowntimeSlasher.sol: Totally reimplemented, to allow downtime to be computed in pieces so as not to exceed the block gas limit.

I'd love to get more feedback on all of this, so that by the time the tooling is ready, we're all on the same page with respect to how core contract upgrades should be done.

@brian | stakevalley.com: The atomic approach sounds good from a technological/release management perspective.

At first glance, it raises these concerns/thoughts from a governance perspective:

If a governance proposal has all changes from X to Y, it increases the risk of "pork barrel" changes. I.e. the larger the review/batch, the more difficult it will be for reviewers to spot problematic or dangerous changes. Smaller batches increases transparency & scrutiny IMO. This to me is the biggest risk of this change as it significantly increases the odds of a bad change making it in. How will we prevent large batches hiding small bad changes (intentional or unintentional)?

If the guidance is to shift the debate to a pull request on

masterand make voting down a governance proposal more expensive, will this disincentivize the community from voting down problematic proposals b/c now rolling things back has additional cost?If the PR on

masteris where a lot of the debate will happen, we'll need to make sure there are proper notifications/timelines/transparency for the community to weigh in. Just as we do for when new governance proposals are raised.For the feedback time on the PR, is there a way acceptance/rejection could be weighted by locked gold similar to how on-chain governance works? Not sure if this is needed, but figured I'd ask.

Long block time

On July 12th, hqueue | DSRV | CeloWhale.com noticed that some blocks took much longer than usual. So far, there isn't much info on why this could happen:

It took about 45 secs for the last epoch block. Anything special with the last epoch such as lots of key rotations?

https://explorer.celo.org/blocks/1399680/transactionshttps://explorer.celo.org/blocks/1399681/transactions

@asa | cLabs: Interesting, we should dig into this. A few things it could maybe be:

Key rotations or a change in the elections meant validators were not properly peered.

Proof-of-stake actions like elections and rewards distribution are slow.

Any idea if we saw this in the previous epoch?

@hqueue | DSRV | CeloWhale.com: We observed this two times for last 30 days. Previous at 1278720-1278721 (74th epoch) (2020-07-05) and recently at 1399680-1399681 (81th epoch) (2020-07-12)

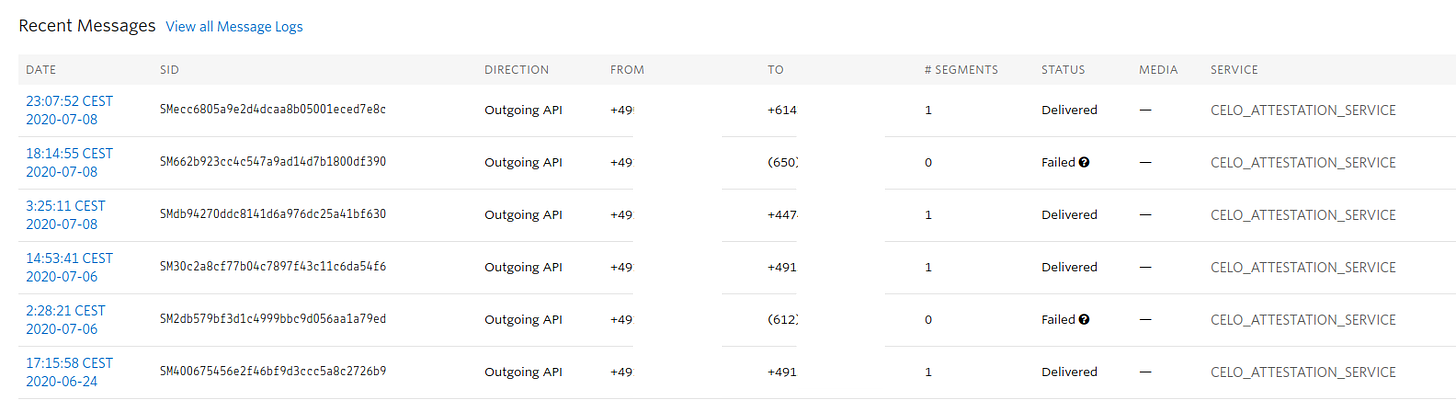

Attestation service issue

Some validators noticed that the attestation service fails to send SMS messages to "improperly" formatted phone numbers:

@Thylacine | PretoriaResearchLab: I seem to be getting consistent failures on improperly formatted recipients (XXX) YYY ZZZ instead of +XXYYYZZZ. Is there bug in the mobile app that isn't sanitizing attestation numbers?

During TGCSO, I didn't get any numbers that were formatted in this way with brackets, and I had 100% success rate during the competition, here's a sample from TGCSO.

@chorus-joe: ooo... yeah, it's like the US numbers (?) are not being internationalized.

I suspect they should all be +1 ...

@Thylacine | PretoriaResearchLab: Perhaps the mobile app is considering US numbers local and not formatting them?

@nambrot | cLabs: Hmm, that is a good question, I can check with the wallet folks. I'm relatively that all numbers are being handled with E164 though.

I suspect that Twilio might just format it that way maybe?

I just checked the source code, and it does look like on the attestation service side we validate that it is a E164 number, so I wonder if the issue is actually that in order for you to send sms to globally, you might need a different "from" phone number?

@Thylacine | PretoriaResearchLab: My attestation logs in the web service show +1650xxxxx as the post request.

So maybe there is something going on with Twilio's end...

@nambrot | cLabs: I think its a very interesting observation indeed that the originating phone number might matter when it comes to delivery. We do somewhat hint to it in the docs

To actually be able to send SMS, you need to create a messaging service under Programmable SMS > SMS. The resulting SID you want to specify under the TWILIO_MESSAGING_SERVICE_SID. Now that you have provisioned your messaging service, you need to buy at least 1 phone number to send SMS from. You can do so under the Numbers option of the messaging service page. To maximize the chances of reliable and prompt SMS sending (and thus attestation fee revenue), you can buy numbers in many locales, and Twilio will intelligently select the best number to send each SMS.We might even want to think about a way to aggregate delivery stats from all validators to find these issues more quickly.

@Thylacine | PretoriaResearchLab: So for everyone having issues sending to American numbers that don't appear formatted correctly in Twilio logs, the short answer is: just buy an American number instead. I was using German number (I specifically chose this during TGCSO for attestation geographical dispersion since many would be based on US mobile infrastructure), but for some reason it always formats the target SMS number with parenthesis and was undeliverable.

Now updated to US number, and even though the target SMS is still not formatted in E.164 international standard, SMS is delivered. I guess it's an undocumented "feature" in Twilio.

Having this behavior also caused mobile app users to repeatedly re-request the attestation SMS, causing "ERROR: Another process has already sent the SMS" because from Twilio's rest API viewpoint, the request was received and processed (and failed asynchronously later), and a record was inserted into our local postgres.

Useful info

If you spin up a new node and have trouble connecting to peers that might be caused by the fact that most of the nodes on the network have their connections maxed out:

@daithi | Blockdaemon: Is anybody else having issue getting new nodes to sync? Seem p2p discovery is only happening sometimes...

@Peter [ChainLayer.io]: I think that's because most people have maxpeers at default value which leaves basically no room from validators to connect to other peers.

Proxies should probably run with a larger maxpeers setting to allow connection to other nodes.

@kevjue | cLabs: I suspect that @Peter [ChainLayer.io] is correct. That most of the fullnodes and proxies are maxed out on the connections and new nodes are having a hard time to find a node to connect to.

aslawson | cLabs started collecting ideas on how to improve the governance process in Celo:

On a related note, I am collecting ideas for Governance 2.0 -- improvements to the governance process. Please add any ideas or +1 ideas you like. It would be great to get this community's feedback

https://docs.google.com/document/d/1_aLO9xnO6ho02BuFGTReuNsDWMEhDS4Omsc48CiN4O8/edit?usp=sharing

Recently, cLabs discovered a critical vulnerability in the code, and all validators had to upgrade their nodes in order to fix it. Here's a high-level overview of the issue:

@zviad | WOTrust | celovote.com: Quick question about the vulnerability (if you can share). As I understand from the high level description of the issue, until at least 1/3-s of the validators upgrade to a new version, a potential attack continues to exist where a sophisticated attacker, using malicious validator, can propose a fake block and have 2/3-s of the validators accept that fake block right? and basically change the history permanently since those 2/3 of the validators will form a majority. (since all validator proxies are directly peered, they all satisfy direct peering pre-requisite for the attack). Is this accurate?

@victor | cLabs: At a high level, that is accurate. One saving grace here is that any patched full-node or validator would be able to detect that this attack occurred on the chain, and would refuse to follow it. So if successful, there would be a fork at the point of attack, but patched clients would take the branch that is unaltered.

Like what we do? Support our validator group by voting for it!