Celo Discord Validator Digest #18

Migration to celo-blockchain 1.1.0, useful info and community updates

One of the challenges we have faced as a Celo validator is keeping up with all the information that comes up in Celo's Discord discussions. This is especially true for smaller validators whose portfolios include several networks. To help everyone stay in touch with what is going on in the Celo validator scene, and to contribute to the validator and broader Celo community, we have decided to publish the Celo Discord Validator Digest. Here are the notes for the period of 28 September - 11 October 2020.

Discussions

Migration of Baklava nodes to 1.1.0

Following the release of celo-blockchain 1.1.0, several validators who tried using their backup nodes for key rotation instead of spinning up completely new nodes have seen the following issue:

@Alex | Easy2Stake: And another weird error I have on Baklava since this back-and-forth with the validator (like I said before, I did some migration tests) is this one:

INFO [10-01|08:45:57.617] Error in handling istanbul message address=0x23836969F0d095AfEB652C75523F740898e0c462 func=handleEvents cur_seq=1690753 cur_epoch=98 cur_round=0 des_round=0 state="Accept request" address=0x23836969F0d095AfEB652C75523F740898e0c462 err="not an elected validator"

INFO [10-01|08:45:58.074] Processing istanbul backlog type=MsgBacklog func=processBacklog cur_seq=1690753 cur_round=1 considered=44 future=0 enqueued=44

INFO [10-01|08:45:58.549] Imported new chain segment blocks=1 txs=1 mgas=0.177 elapsed=29.575ms mgasps=5.998 number=1690753 hash=4a98b5…a3fe3d dirty=1.56MiB

INFO [10-01|08:45:58.885] Error in handling istanbul message address=0x23836969F0d095AfEB652C75523F740898e0c462 func=handleEvents cur_seq=1690754 cur_epoch=98 cur_round=0 des_round=0 state="Accept request" address=0x23836969F0d095AfEB652C75523F740898e0c462 err="not an elected validator"

...

LE: It seems that after I deleted the nodekey and let it recreate it I no longer have the error mentioned above.
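For anyone who wants to check how often this error shows up in their own logs, here is a minimal sketch that splits log lines of the shape quoted above into a message and key=value fields, then counts the `err` values. The function names `parse_log_line` and `count_errors` are ours, not part of celo-blockchain, and the parser only assumes the `LEVEL [timestamp] message key=value ...` layout seen in this excerpt.

```python
import re
from collections import Counter

# A key=value pair where the value is either "quoted text" or a bare token.
PAIR = re.compile(r'(\w+)=("[^"]*"|\S+)')
# The line prefix: level, bracketed timestamp, then the rest of the line.
HEAD = re.compile(r'(\w+)\s+\[([^\]]+)\]\s+(.*)')


def parse_log_line(line):
    """Return level, time, msg and fields for one log line, or None if it does not match."""
    head = HEAD.match(line)
    if head is None:
        return None
    level, timestamp, rest = head.groups()
    first_pair = PAIR.search(rest)
    # Everything before the first key=value pair is the human-readable message.
    message = rest[:first_pair.start()].strip() if first_pair else rest.strip()
    fields = {key: value.strip('"') for key, value in PAIR.findall(rest)}
    return {"level": level, "time": timestamp, "msg": message, "fields": fields}


def count_errors(lines):
    """Count how often each err="..." value occurs in the given lines."""
    counts = Counter()
    for line in lines:
        entry = parse_log_line(line)
        if entry and "err" in entry["fields"]:
            counts[entry["fields"]["err"]] += 1
    return counts


# Two lines copied from the excerpt above.
sample = [
    'INFO [10-01|08:45:57.617] Error in handling istanbul message '
    'address=0x23836969F0d095AfEB652C75523F740898e0c462 func=handleEvents '
    'cur_seq=1690753 cur_epoch=98 cur_round=0 des_round=0 state="Accept request" '
    'address=0x23836969F0d095AfEB652C75523F740898e0c462 err="not an elected validator"',
    'INFO [10-01|08:45:58.074] Processing istanbul backlog type=MsgBacklog '
    'func=processBacklog cur_seq=1690753 cur_round=1 considered=44 future=0 enqueued=44',
]

print(count_errors(sample))
```

Feeding it a whole log file (one line per entry) gives a quick picture of whether the "not an elected validator" message is occasional or constant.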

@Bart | chainvibes: got the same 'Error in handling istanbul message' on Baklava after rotating to another node and giving this non-elected validator node a new address. Removing nodekey and restarting didn't help. Unfortunately one thing I notice is that it continuously (every few minutes) drops 70% of its peers and recovers, which this same node didn't do earlier when it was validating on Baklava. Increased maxpeers setting but that didn't help. Node is running v1.1.0.

...

LE: after it's being elected on baklava it shows no sudden drop anymore for both v1.0.1 or v1.1.0

@Alex | Easy2Stake: I guess it is related to the nodekey / IP address somehow, the other peers expecting to see your IP with another nodekey. Did your "new" validator node have anything in common with the "old" one? IP / celo-node folder etc.?

@Bart | chainvibes: That's what I suspect too, therefore I removed the nodekey, but the IP stayed the same. We either need to know what the steps are when reusing chaindata / celo-node folder / etc. or the reason why this happens. Could help us on mainnet.

@Alex | Easy2Stake: Totally. I think the problem is when "reusing" any of these (ip / nodekey) with the validator role. But would be nice to have a confirmation.

@Bart | chainvibes: Indeed. Strange thing were the sudden drops and recovery of the total nr of peers as you can see in previous picture. This node had the --mine option but was not elected. Restarting with a new nodekey file didnt solve the sudden drops. As soon as this full node with the --mine option became an active validator everything was fine again, no drops of peers anymore.

@Alex | Easy2Stake: I'm not an expert, but theoretically speaking:

The nodekey/IP relationship is part of the P2P network and each node on the network should have an address book keeping this information. In theory there should be an "invalidation" mechanism such as a timeout that would consider peers dead (enode@IP) and make room for another (enode@IP) where either "enode" or "IP" would be the same. I'm also guessing that whenever the network detects two peers with different enodes but the same IP address (respectively same enode / different IP) one of the peers can/(should?) be considered invalid.

I'm simply following some logic when writing this stuff down so please don't take it as a "confirmation". I'd like to hear more on this from others as well.

LE: I also think that the sudden drops of peers are related to this address book if the following would happen:

Your node connects to peer "A"

Peer "A" has another (enode@IP) in its address book and considers your peer "invalid".

Your node gets a "connection reset".

Does this make any sense to you guys?

PS: For those reading this for the first time, we are exploring the "hypothesis" when starting a new celo node by keeping either the same enode or IP address.
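To make the two cases in this hypothesis concrete, here is a toy model of such an "address book". It is purely illustrative: the `classify_peer` function and the idea of a plain enode-to-IP mapping are our assumptions for the sketch and say nothing about how celo-blockchain actually tracks peers.

```python
def classify_peer(address_book, enode_id, ip):
    """Compare an incoming (enode_id, ip) pair with what a peer already remembers.

    address_book is a hypothetical dict mapping enode_id -> ip.
    """
    if address_book.get(enode_id) == ip:
        return "known"
    if enode_id in address_book:
        # The nodekey was kept but the machine (or its IP) changed.
        return "same enode, different IP"
    if ip in address_book.values():
        # The nodekey was regenerated on the same machine.
        return "same IP, different enode"
    return "new"


# What peer "A" remembers from before the migration (made-up values).
book = {"enode-old": "203.0.113.10"}

print(classify_peer(book, "enode-old", "203.0.113.10"))  # known
print(classify_peer(book, "enode-old", "203.0.113.99"))  # same enode, different IP
print(classify_peer(book, "enode-new", "203.0.113.10"))  # same IP, different enode
print(classify_peer(book, "enode-new", "203.0.113.99"))  # new
```

In the discussion, the two middle outcomes are the ones suspected of causing trouble; only the last one corresponds to spinning up a completely new node.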

@20/20 | Virtual Hive: cannot confirm any of your theories but there are these kind of things happening in baklava and mainnet. Whenever you see some validators missing blocks every 100 blocks, it should usually be caused by a connection loss between 2 (or even more) validators, so this is seen pairwise. We had this problem a while ago when our validator failed to sign a couple of blocks every 100 block cycle. We couldn't find the root cause for this but after some investigation our best guess currently is that some validators change either only IP or only the enode when they change their setup (because of failover or just changing machine to update the other).

Maybe if one of those 2 scenarios, same enode but different IP or same IP but different enode, occur, the p2p mechanism is struggling. That's guesswork, though.

Btw the "address book" should be the valEnodeTableInfo, which can be queried from a validator like this: istanbul.valEnodeTableInfo (in the geth console).

@Alex | Easy2Stake: Thanks for the info, it takes us a little bit closer !

Continuing the guesswork, I looked inside the table. Let's take for example the "CLabs Validator #0 on baklava" validator:

0x0Cc59Ed03B3e763c02d54D695FFE353055f1502D: { // signer address
    enode: "enode://e1d5d31ecc05a4c582ccf82fd3ec32bf3c688708afbe0eb2fbda298edc987568997bae13d2c1bc5481258bfa7603869c78313b3386ec951a076298616c9908ce@35.185.222.207:30303",
    highestKnownVersion: 1602009476,
    lastQueryTimestamp: "2020-10-06 16:41:33.013579486 +0000 UTC",
    numQueryAttemptsForHKVersion: 0,
    publicKey: "0x03b669ae8afa902217245c212c2cf759a77126c0b989da87cb845bb67395446894",
    version: 1602009476
}

The valEnodeTableInfo keeps all the info under the signer address. Would make sense that if the validator will perform key rotation on the same "celo-node" the other peers should be somehow aware. If not, we will have something like this:

0xNEW-VALIDATOR-SIGNER-KEY: {
    enode: "enode://e1d5d31ecc05a4c582ccf82fd3ec32bf3c688708afbe0eb2fbda298edc987568997bae13d2c1bc5481258bfa7603869c78313b3386ec951a076298616c9908ce@35.185.222.207:30303",
    ....
}

Now we have two different SIGNER_KEYS with the same "enode" if key rotation is performed on the same machine. The unknown is how will the p2p mechanism handle it. Any ideas ?
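The situation described here (two signer addresses pointing at one enode) can be spotted mechanically once the table has been copied out of the console. The sketch below assumes the table has been converted by hand into a Python dict keyed by signer address, with an `enode` string per entry as in the output above; `split_enode` and `signers_sharing_enode` are hypothetical helper names, and the second entry reuses the placeholder key from the message rather than a real address.

```python
from collections import defaultdict
from urllib.parse import urlparse

ENODE = (
    "enode://e1d5d31ecc05a4c582ccf82fd3ec32bf3c688708afbe0eb2fbda298edc987568"
    "997bae13d2c1bc5481258bfa7603869c78313b3386ec951a076298616c9908ce"
    "@35.185.222.207:30303"
)


def split_enode(enode_url):
    """Split enode://<node id>@<ip>:<port> into (node_id, ip, port)."""
    parsed = urlparse(enode_url)
    return parsed.username, parsed.hostname, parsed.port


def signers_sharing_enode(table):
    """Return {node_id: [signer, ...]} for node ids listed under more than one signer."""
    by_node = defaultdict(list)
    for signer, entry in table.items():
        node_id, _ip, _port = split_enode(entry["enode"])
        by_node[node_id].append(signer)
    return {node_id: signers for node_id, signers in by_node.items() if len(signers) > 1}


# The real entry from above plus the placeholder entry from the message.
table = {
    "0x0Cc59Ed03B3e763c02d54D695FFE353055f1502D": {"enode": ENODE},
    "0xNEW-VALIDATOR-SIGNER-KEY": {"enode": ENODE},
}

for node_id, signers in signers_sharing_enode(table).items():
    print(node_id[:16] + "...", "is listed under", signers)
```

Whether such a duplicate actually confuses the p2p layer is exactly the open question in the thread; the sketch only makes the duplicate visible.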

@Thylacine | PretoriaResearchLab: I think this could be the solution.

Key rotation on first rotation to a new or backup server works fine as the celo folder / nodekey matches everyone's address book.

Key rotation on subsequent rotations to an existing server where you are burning the previous signing key (because you can't authorize the same key twice) requires you to delete the nodekey file in the celo folder. Keep the chainstate if you don't want to resync from genesis. How does that sound?

@syncnode (George Bunea): That is pretty correct from my previous tests.
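The suggestion above (delete the nodekey on a reused server, keep the chain state) could be scripted roughly as follows. This is a sketch under assumptions: the `reset_nodekey` helper is ours, the default location `<datadir>/celo/nodekey` is inferred from "the nodekey file in the celo folder" and may differ on your setup, and the node should be stopped before running it.

```python
from pathlib import Path


def reset_nodekey(datadir, subdir="celo", filename="nodekey"):
    """Delete <datadir>/<subdir>/<filename> and leave everything else in place.

    Returns True if a nodekey file was removed, False if none was found.
    The default subdir and filename are assumptions; adjust them to your node.
    """
    nodekey = Path(datadir) / subdir / filename
    if nodekey.is_file():
        nodekey.unlink()
        return True
    return False
```

Calling `reset_nodekey("/path/to/celo-node")` before restarting leaves the chain data untouched, so the node does not need to resync from genesis, and, as reported earlier in the thread, the node recreates the nodekey on the next start.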

Useful info

@medha | cLabs: Updated versions of the following packages were released last week: @celo/utils@0.1.21 and @celo/celocli@0.0.58.

According to Shen | Bi23 Labs, the Celo Reserve increased by 91 BTC.

There is going to be a hard fork of Celo sometime by the end of the year:

@pranay: There will be a Celo hard fork closer to the end of the year. This is needed to make some non-backwards compatible changes such as adding more information to the epoch snark data (https://github.com/celo-org/celo-blockchain/pull/1158). This is still a couple months out at the very least, so as @syncnode (George Bunea) mentioned, there will be significant notice and information in advance, to understand what all changes will make it into the hard fork.

The current governance proposals and release 1.1.0 are not hard forks -- they are normal upgrades to the Celo core contracts and celo-blockchain client respectively.

One last thing re: the hard fork - as mentioned in Kuneco, it needs a name. The theme per the other networks is desserts or sweets that start with the letter "D". There will be a poll, so if you have any good dessert names, please share.

Community

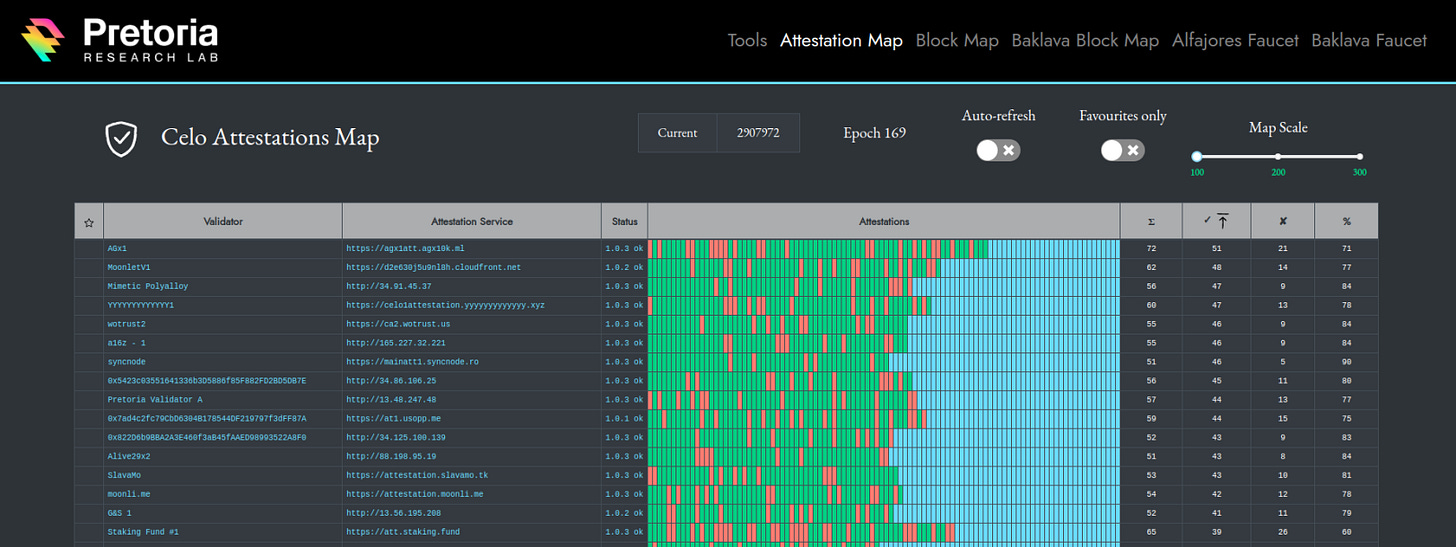

Thylacine | PretoriaResearchLab presented a new feature of the Celo Cauldron:

Hi everyone, I've released a new feature to https://cauldron.pretoriaresearchlab.io/attestations that allows us to visualize the growth and behaviour of attestations on the network.

I've parsed the requested, issuer selected, and completed events from the network from genesis to the current block. It updates every 2 minutes with any new data.

It also retrieves your current metadata and queries the health and status endpoints of your service.

Those of you who blocked these paths and/or added strict CORS settings made my life difficult.

Some tips:

Use the "Auto-refresh" slider to keep it constantly updating every 120 seconds

Hover over any individual attestation to get txId and other information

Hover over the "Status" cell to view your full status

Click on table headings to sort up and down

Click on the "Status" hyperlink in the cell to visit that service's status response directly

Looks best on 100 or 200 scale, 300 is a bit squishy, don't even try on mobile

Future upgrades:

In the future I will try to hyperlink to the issuer selected event when clicking on an individual attestation cell

Will add in more detailed status information

Add in averages and better aggregates

Will start highlighting services running significant versions behind

Will choose a better layout once attestations and sign-ups use the available space

Include metrics on 1.0.5 new endpoints and restarts

Note:

There may be bugs, since the data model of the attestation events was extremely loosely related and it took a fair bit of fuzzy logic to tie them together. If you find something incorrect, please let me know here or raise an issue on GitLab. Any feature requests or feedback welcome!

@Patrick | Validator.Capital submitted a draft of the Celo Community Fund Constitution:

Here is a first draft of a Celo Community Fund 'meta-proposal' based on Ostrom's design principles. Anyone who wishes to provide input or suggest changes can comment here or within the document. Once we get to a final draft, then I will submit it as a Celo Governance Proposal for ratification. I'm hoping to submit it within 7-10 days.

https://docs.google.com/document/d/15xkmj6mXaLAjNcdvGX3PKGM3aanuvVL1IYXeWHvfxBs/edit?usp=sharing

...

We will be hosting a Community Fund call on Thursday Oct. 15th at 9am PT to discuss the Community Fund 'meta-proposal'. If you'd like to have a say in how the Community Fund governance will work or the sort of projects that it will focus on, then please try to join or comment on the proposal itself.

Meeting Link: https://meet.google.com/opi-odpo-xai

Like what we do? Support our validator group by voting for it!