Celo Discord Validator Digest #21

Emergency upgrade to version 1.1.1 on mainnet, missed attestations, missing signatures after upgrade to version 1.2.* on Baklava, useful info and community updates.

One of the troubles we have faced as a validator for Celo is keeping up with all the information that comes up in Celo's Discord discussions. This is especially true for smaller validators whose portfolios include several networks. To help everyone stay in touch with what is going on in the Celo validator scene and to contribute to the validator and broader Celo community, we have decided to publish the Celo Discord Validator Digest. Here are the notes for the period of 9-22 November 2020.

Discussions

Emergency upgrade to version 1.1.1 on mainnet

Following the announcement to upgrade nodes to version 1.1.1, several validators upgraded in-place and faced downtime of various lengths:

@zviad | WOTrust | celovote.com: Overall though, I would say even minor version upgrades are pretty dangerous in-place. If things go well, they go well and that is fine, but considering there are still so many "not so clear" ways local chaindata can get corrupted and cause large downtimes, I would say key rotation is really the only safe way to go.

...

The announcement also encourages to upgrade to 1.1.1, in-place. This is maybe ok if you are already running 1.1.0, but going from 1.0.x -> 1.1.1 in-place seems like playing with fire. There are pretty significant changes in there to recommend in-place upgrading.

Considering at least 50% of the people are still running 1.0.1 (or 1.0.0 still!), blanket recommendation to upgrade to 1.1.1 in-place will probably cause more harm than benefit imo.

Most likely, the issues were caused by outdated variables in startup scripts:

@zviad | WOTrust | celovote.com: Assuming people had setup their startup scripts based on the old documentation, it had something along the lines of :

BOOTNODE_ENODES=`sudo docker run --rm --entrypoint cat $CELO_IMAGE /celo/bootnodes`

sudo docker run ... --bootnodes=$BOOTNODE_ENODES ...

I wouldn't be surprised if this trips up a lot of people when going from 1.0.x directly to 1.1.x. Because now with 1.1.x first command will just fail, causing their startup script to exit with error. Of course it is possible to upgrade safely to 1.1.x in-place. And if nothing goes wrong it should be pretty fast. But there are definitely plenty of ways to screw it up, unless you are being pretty careful.
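The failure mode described above (a bootnode lookup that now fails and aborts the whole startup script) can be guarded against. The following is only a sketch: `fetch_bootnodes` and `bootnode_args` are hypothetical helper names, and whether a 1.1.x node should be started without `--bootnodes` at all is an assumption to check against the current docs.

```shell
# Sketch only: tolerate a failing bootnode lookup instead of aborting startup.
# fetch_bootnodes and bootnode_args are hypothetical helpers, not part of any Celo tooling.

# Old-style lookup from the 1.0.x documentation; per the discussion it fails on 1.1.x images.
fetch_bootnodes() {
  sudo docker run --rm --entrypoint cat "$CELO_IMAGE" /celo/bootnodes 2>/dev/null
}

# Runs the given lookup command and prints a --bootnodes flag only if it
# succeeded and returned something; prints nothing otherwise.
bootnode_args() {
  if enodes="$("$@")" && [ -n "$enodes" ]; then
    printf '%s%s' '--bootnodes=' "$enodes"
  fi
}

# Usage (not executed here):
#   sudo docker run ... $(bootnode_args fetch_bootnodes) ...
```

With this shape, a script written for 1.0.x keeps working there, while on 1.1.x the flag is simply omitted rather than the script exiting with an error.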

The upgrade also included a new flag --light.serve:

@Thylacine | PretoriaResearchLab: ... Also added in the new parameter --light.serve 0 which appeared in the docs.

@Joshua | cLabs: It's a pretty recent addition. It was added b/c the default light.serve is 50

...

The only change from 1.1.0 to 1.1.1 in the start command is the optional (but recommended) addition of --light.serve 0

@mbay2002 | Qoor: From the geth docs:

--light.serve value Maximum percentage of time allowed for serving LES requests

Should this only be set on validator and proxy, or should any full node have this? If everyone sets this to zero, who will serve light clients?

@zviad | WOTrust | celovote.com: Just on validator and proxy. Full nodes should probably allow even more percentage to serve light clients if they are feeling generous.

Docs here: https://docs.celo.org/getting-started/mainnet/running-a-full-node-in-mainnet#start-the-node recommend 90% for full nodes (i.e. --light.serve 90).

@Joshua | cLabs: This is specifically for proxies and validators not behind a proxy. Right now it probably doesn't have a big impact given (currently) smaller number of light clients, but it's to prioritize participating in consensus for validator. cLabs currently runs a number of nodes dedicated to serving light clients and is exploring incentives for full node operators to serve light clients.

@mbay2002 | Qoor: ... So technically attestation nodes (and account nodes) are full nodes, couldn't an argument be made that attestation nodes should not be serving light clients? Or should they?

@asa | cLabs: I would agree with this, no reason for those nodes to serve light clients.

The docs should probably be updated to highlight the difference between a full node that serves light clients and one that doesn't.
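The per-role guidance from this thread can be summarized as a small lookup. This is a sketch under stated assumptions: the role names and the `light_serve_flag` helper are hypothetical, and the values only restate what was said above (0 for validators, proxies, attestation and account nodes; 90 for full nodes that serve light clients, per the linked docs).

```shell
# Sketch: map a node role to the --light.serve value discussed in the thread.
# Role names and this helper are illustrative, not an official convention.
light_serve_flag() {
  case "$1" in
    # Consensus-critical or non-serving nodes: do not serve light clients.
    validator|proxy|attestation|account)
      printf '%s\n' '--light.serve 0' ;;
    # Full nodes that choose to serve light clients (docs recommend 90).
    fullnode)
      printf '%s\n' '--light.serve 90' ;;
    *)
      echo "unknown role: $1" >&2
      return 1 ;;
  esac
}

# Usage (not executed here):
#   sudo docker run ... $(light_serve_flag proxy) ...
```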

Missed attestations

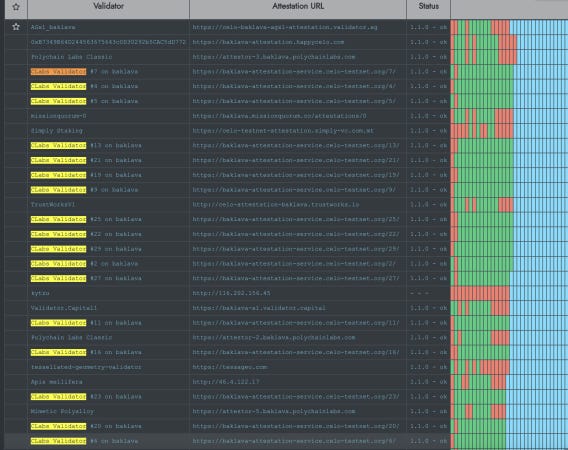

The reported increase in failed attestations in Baklava led to suspicion that the error might be on the cLabs side:

@ag: Curious why in baklava all recent clabs attestations are OK but all recent non-clabs attestations are failed:

...

Can't be coincidence here - 100% fail for non-clabs attestations and 100% success for clabs.

@timmoreton | cLabs: This is definitely interesting. Looking at a handful of metrics from non-cLabs attestation services, I see a lot of these 30008 Twilio errors:

attestation_attempts_delivery_error_codes{provider="twilio",country="GB",code="30008"} 4

The Attestation Bot is creating Twilio phone numbers in the same account as the cLabs validators use, so I wonder if it's some issue in how we create those numbers in Twilio.

@aslawson | cLabs: We've identified the issue with the attestation bot is most likely due to cLabs Twilio configurations. The cLabs Baklava sender numbers (used for cLabs Baklava attestation services) and the receiver numbers for the bot are in the same account. The bot pulls a number from the full pool and if there are no attestations attempted with it onchain, it will attempt to use that for the receiver number. However, our "senders" are not configured correctly for it to be successful so these will never have attestations. Our bot has run out of correctly configured numbers, is attempting to use the sender numbers, and it will not automatically create new numbers while these others exist in the pool.

We are looking into separating the accounts, so we shouldn't need a fix on the attestation service itself. Stay tuned.
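Operators who want to check their own attestation service for the same symptom could total the error counter quoted above. The sketch below assumes the metrics text is piped in on stdin in the format shown in the quote; how you obtain that text (endpoint, port, path) is deployment-specific and not assumed here, and `sum_delivery_errors` is a hypothetical helper name.

```shell
# Sketch: sum attestation_attempts_delivery_error_codes samples for one error code.
# Reads Prometheus-style text on stdin; the helper name is illustrative.
sum_delivery_errors() {
  code="$1"
  awk -v code="$code" '
    # Match only the delivery error counter, then filter on the code label.
    index($0, "attestation_attempts_delivery_error_codes{") == 1 &&
    index($0, "code=\"" code "\"") > 0 { total += $NF }
    END { print total + 0 }
  '
}

# Usage (not executed here):
#   <command that prints your service metrics> | sum_delivery_errors 30008
```

A steadily growing total for code 30008 across countries would match the pattern @timmoreton described.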

Meanwhile, the attestation error rate on mainnet also spiked:

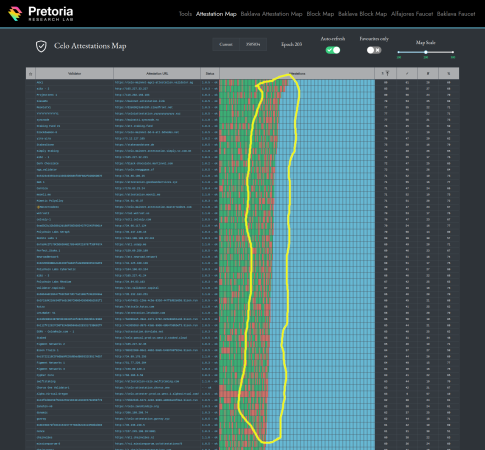

@Thylacine | PretoriaResearchLab: I haven't done the maths, but visually it looks like the density of failed attestations is on the rise. Am I seeing things or is the failure density higher of late? This is mainnet. Personally, I've failed 11 of the last 16 attempts.

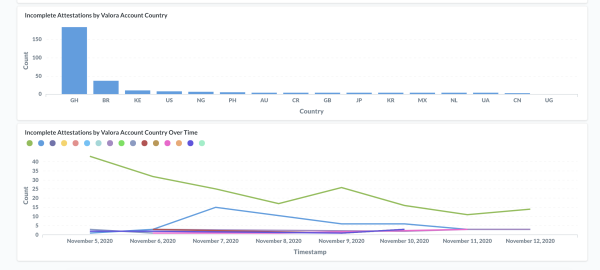

@aslawson | cLabs: After some digging, it looks like a couple things are impacting the mainnet completion rates:

The main reason appears to be a large increase in Valora traffic from Ghana -- apparently in some cases providers are claiming they are DELIVERED when they are not OR the user(s) in the region are intentionally not completing perhaps for testing purposes. For this, we are looking into providers that would improve delivery rate for this and other countries/carriers. We also believe activating the feature for sending the 8-digit security code texts will help as it has shown in testing to increase deliverability (this functionality is already released in latest attestation service version).

There was some internal testing ~20 requests for our feeless attestation service that were intentionally not completed (should not continue to be an affect).

There is a bug in the feeless attestation flow that has an issue with completion transaction race conditions (minor impact -- fix is in progress).

If people like visuals, I just pulled some graphs together. It appears that in the past 7 days, 184 incomplete attestations came from Ghana. Though it appears to be trending down.

Missing signatures after upgrade to version 1.2.* on Baklava

Several validators experienced uptime issues after upgrading their nodes to version 1.2.* on Baklava:

@ag: Rotation from 1.1.0 to 1.2.0 + 3 proxies went unpretty.

After epoch started, the new signer started receiving "Elected but didn't sign block" WARNings. I have waited about 300-400 blocks, flipped signer key to old proxy+validator. after some lag (several minutes) it started signing.... stopped it, started back to new validator with 3 proxies.

Never had any problems with rotations so I'm shocked to say the least. For this time I'll just assume that I have messed something up starting 3 proxy setup, and just leave this semi-report here in case anybody has something similar...

...

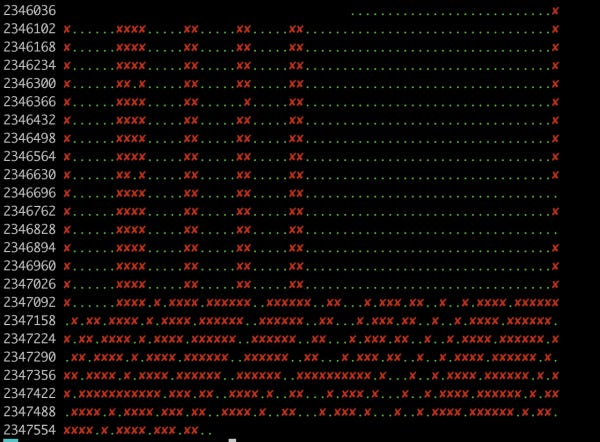

Gets even worse after testing the hotswap:

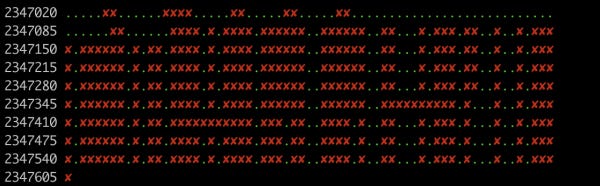

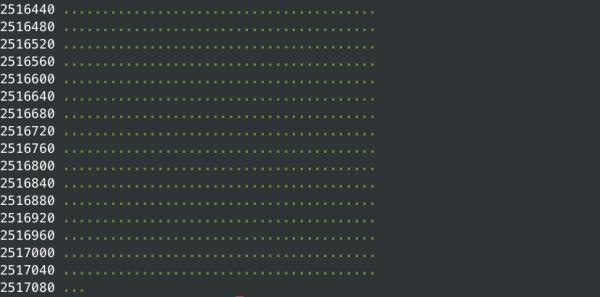

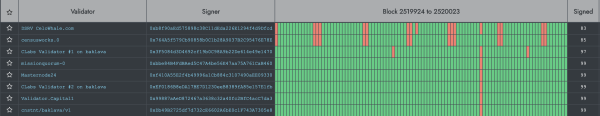

celocli validator:signed-blocks --signer 0x6511e5a75540edFe38334226f041Ea068e9ac299 --lookback=1500 --width=66

Note the 66 width. It's current set size 70 minus 4 offline validators (I assume so)

You will guess yourself when I did a hotswap.

With width=65 it looks better - like they are excluding me.

@james | censusworks: It's quite a brutal time on Baklava right now. I got paged on censusworks not signing blocks after key rotation but it appears that not signing blocks is very common.

https://baklava-celostats.celo-testnet.org/ isn't that helpful right now re showing version numbers but it appears maybe that the validators that are struggling are on 1.2.1.

Of course that could also be that they've been recently rotated and the version number is a red herring.
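The `--width` arithmetic in @ag's command above (elected set size minus validators assumed to be offline) can be made explicit. This is a sketch: `signed_blocks_cmd` is a hypothetical helper that only prints the command, and the set size and offline count are values you would have to supply yourself.

```shell
# Sketch: build the signed-blocks command with width = set size - offline validators.
# The helper only prints the command; it does not call celocli.
signed_blocks_cmd() {
  signer="$1"
  set_size="$2"
  offline="$3"
  width=$((set_size - offline))
  printf 'celocli validator:signed-blocks --signer %s --lookback=1500 --width=%s\n' \
    "$signer" "$width"
}

# Usage (not executed here):
#   signed_blocks_cmd 0x6511e5a75540edFe38334226f041Ea068e9ac299 70 4
```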

The situation seemed to have improved for some validators after the 1.2.2-beta release:

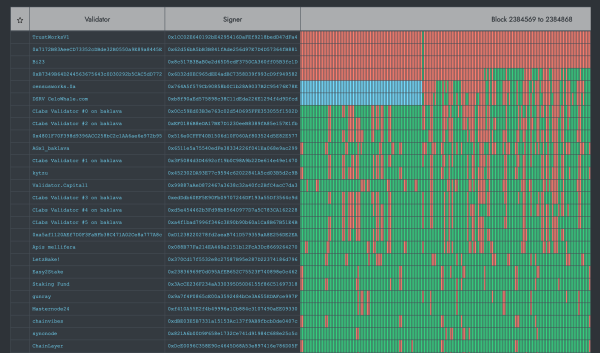

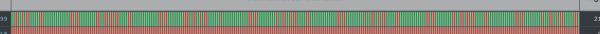

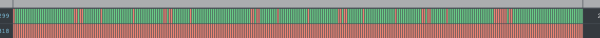

@ag: Looks much better after 1.2.1->1.2.2-beta (though maybe it's just a restart?)

1.2.1:

1.2.2-beta:

1.2.2

However, others still experienced issues with block signing:

@mbay2002 | Qoor: ... I updated to 1.2.2-beta but it didn't change anything.

@james | censusworks: Yep, I'm on 1.2.2-beta now and still have the same problems.

Useful info

There was some reorganization of channels in Celo's Discord, and the #validators channel has been renamed to #general-operations.

Following Governance proposal 13, slashing is now active on mainnet:

@zviad | WOTrust | celovote.com: Current Governance proposal 13 deploys new slashing contract that will actually work, unlike previous one that wasn't working due to very large gas requirements.

Penalties are accurate, most important penalty being: half epoch and voter rewards for 30 days.

...

Also half voter rewards for 30 days will most likely mean losing all votes from other users. So for non self funded validators, that is most likely going to be end of their career.

The minimum amount of votes required to elect a validator has surpassed 2 million:

@Patrick|Validator.Capital|Moola: We've crossed the 2m vote threshold for electing a validator:

Minimum votes for group of size N:

V(1): 2,000,276

Note on the use of the --istanbul.replica flag in version 1.2.*:

@Marc | LetzBake!: Ok, I checked the state of the other validator and it showed as being the replica, although it was not started with the respective flag. But I previously set it to replica in geth. Seems that I do not yet fully understand the new replica/hotswapping functionality in 1.2.x.

So the replica state is preserved also when stopping and removing the validator in docker, and then restarting it also without the --istanbul.replica flag? The same seems to be the case with the validator that I started with the replica flag, but it starts in primary mode nonetheless.

Do the two validators somehow communicate their state so to ensure that one is always the primary and one the replica to prevent slashing from double-signing by at the same time protecting uptime?

@Joshua | cLabs: The validators do not communicate their state to each other. The replica state is preserved in datadir/replicastate. Nodes will preferentially use that over the command line (to protect against a node restarting and accidentally coming up). Right now to sync up a replica you need to make sure that it was started with --istanbul.replica at the first startup the while syncing. If you copy over an existing data-dir, you can only take the chain data or if copying the entire data-dir, you can remove the replicastate folder on the replica and start it with --istanbul.replica.
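The last option Joshua describes (copy the entire data-dir, then drop the saved replica state before the first start) could look roughly like this. It is a sketch under assumptions: `reset_replica_state` is a hypothetical helper, and the `replicastate` folder is assumed to sit directly under the data directory as written in the quote.

```shell
# Sketch: clear the persisted replica state in a copied data-dir so that the
# --istanbul.replica flag takes effect on the next start.
# Run this only on the replica, with the node stopped.
reset_replica_state() {
  datadir="$1"
  if [ -z "$datadir" ] || [ ! -d "$datadir" ]; then
    echo "datadir not found: $datadir" >&2
    return 1
  fi
  # Only the replicastate folder is removed; chain data is left untouched.
  rm -rf -- "$datadir/replicastate"
}

# Usage (not executed here):
#   reset_replica_state /path/to/copied/datadir
#   sudo docker run ... --istanbul.replica ...
```

Because nodes prefer the saved state over the command line, skipping this step on a data-dir copied from a primary would presumably bring the copy up as a primary too, which is the double-signing risk the replica flag is meant to avoid.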

Community

Mariano | cLabs shared a healthchecker for validator nodes:

If someone is interested in the golang way using kliento. We use a very simple healthchecker on testnets to check signatures https://github.com/celo-org/kliento/blob/jcortejoso/health_checker/examples/health_check/main.go

I enjoy not having to deal with abstraction layers, and just get "the block header".

Thylacine | PretoriaResearchLab shared a guide on adding TLS to an attestation node:

https://gist.github.com/aaronmboyd/ad17e1cec27da3321c78ff5ac5a1f9c1

Some new updates have been pushed to Cauldron:

@Thylacine | PretoriaResearchLab: Hi folks, I've added some new features:

TLS indicator

Summaries / average row

Increased minimum width of map to 150 now that some are approaching 100

Like what we do? Support our validator group by voting for it!